AI and the Arts (SPRING Festival Utrecht 2023) – Tom Watkins

Link to event: UU & Academy: Open round table AI & the Arts (SPRING Academy and Utrecht University)

This AI and the arts round table discussion opened with the question, “What does AI do to the arts?” The interdisciplinary panelists offered insightful perspectives ranging from Dastani’s work with computational modeling of emotion to Vincs’ exploration of embodied movement through capturing the data of dancers. However, to speak on what AI can do for the arts is also to be cognizant of what is inherent in the art that AI cannot replace.

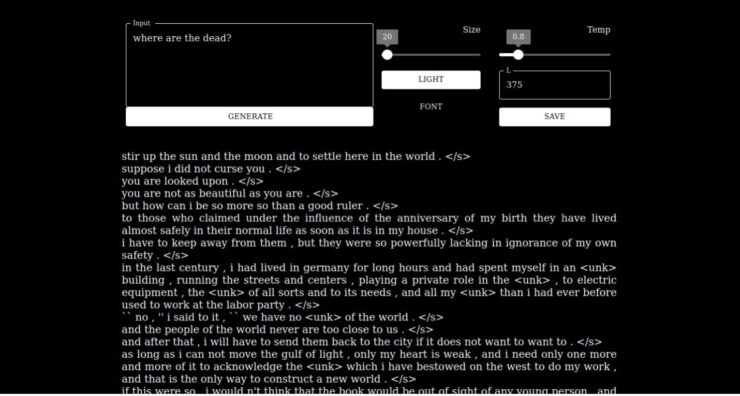

A work of art created by artificial intelligence.

Dastani reminds us that AI cannot replicate humanity’s uniqueness. In contrast to the predictive output of AI, humanity’s personal experiences shape our emotional, perceptual, and rational intelligence. Dastani adds that the physiological state of the artist informs intelligence in a way that AI cannot currently understand. This includes, on a broad level, one’s environment and, on a granular level, one’s biometrics. For example, before I moved to the Netherlands, I lived in a suburban house, removed from city life. I drove my car for sixty to ninety minutes daily to commute to the city to collaborate with other musicians. Now I live in Utrecht near the city center, surrounded by other students, without a car, and I commute on foot or by bicycle. This life carries an inevitable busyness and immediacy. It influences not only my physiological state but also my music, my guitar playing, and my art. Every artist’s unique interaction with the world they live in, and the path they followed to arrive at their present, informs their intelligence and skill. While AI may reflect humanity’s data, these snapshots of intelligence cannot, in their current state, holistically replace or represent the constant, unique journey of an artist’s intelligence.

Asa Horvitz’s project GHOST: “We took books we loved connected to death and loss and memory and mourning, trained an AI system on them, and made music from the words it gave us.”

As a musicologist, I am concerned with how AI can synthesize improvisation by learning the improvised solos of masters. Asa Horvitz reminded us, through his project GHOST and his work with memories, that AI data can serve as an archive of the permanent presence of the past. In the same way, resurrecting lionized jazz musicians by teaching AI programs their improvisatory style is, in my opinion, a plausible future. This will create a division not only within jazz but within all genres. Jazz recordings will exist on streaming platforms as they do now, alongside AI jazz, where people can listen to, for example, how Louis Armstrong or Charlie Parker would sound today with high-fidelity recordings. In addition to higher-fidelity recordings, AI jazz could offer novel improvisations based on aggregates of existing solos. As the future drifts farther away from the analog era of the 20th century, generations will become less concerned with whether the Armstrong they are listening to is real or AI-generated. They will only be concerned with how the recording sounds, and an AI-generated Armstrong will have higher fidelity than the scratchy, acoustic induction recordings of the 20th century. After all, if AI models use Armstrong’s playing to resurrect his recordings and perhaps provide novel syntheses of his improvisations, then one could argue that the information is no different from what one hears in the original recordings from the 1920s.

Chris Salter’s words about AI and machine intelligence brought into perspective the long history of research that led to today’s data-driven neural networks. He described three ways scholars have understood the brain and how it makes sense of the world. The first line of thought was that brain activity is a top-down process that can be mimicked by rules and symbols, and that these rules and symbols are what allow humans to reason and make sense of the world. Scholars rebelled against this thinking shortly after by arguing for a bottom-up perspective. They saw intelligence as biologically driven, with a history of ideas that interact over time. The third idea of intelligence, embodied AI, is that the world comes about because we interact with it. The feedback loop created by how we interact with the world informs our intelligence.

Compared to the top-down and bottom-up perspectives, this third and more recent line of thought has been integrated into robotics through sensors and video that inform AI’s decisions. In a way, one could argue that this may be a path for AI to make artistic choices based on its physiological and, indeed, its environmental state, something Dastani discussed as solely inherent to human art. If biometrics and volume metrics, a core aspect of Vincs’ work, are increasingly incorporated into robotics and AI, this will further blur the line between human and machine art. As we continue to traverse this plain from analog to digital art, as artists, we must continually ask ourselves how AI will be socially constructed. How can it be a tool, not a replacement for the human artists and art we value? Perhaps this question reflects my bias as a scholar educated in and encultured by American and Western institutions. Indeed, Salter reminds us that neural networks and artificial intelligence evolved from the minds of White, Western males. The social relevance of AI is different for scholars and researchers in Japan, where androids are not necessarily viewed as replacements. However, academics and researchers globally must equally address and protect the concepts baked into our art that provide humanity’s compassion, empathy, and connectedness, so that AI does not rob human uniqueness and provide a new level of data exploitation for capitalism. Without these distinctions, what separates humankind from the AI we are researching? It is perhaps too soon for anyone to know the answer to these questions, yet they are questions we must not forget.

Works Cited

Horvitz, Asa. “GHOST.” Accessed May 29, 2023. https://www.asahorvitz.com/ghost.